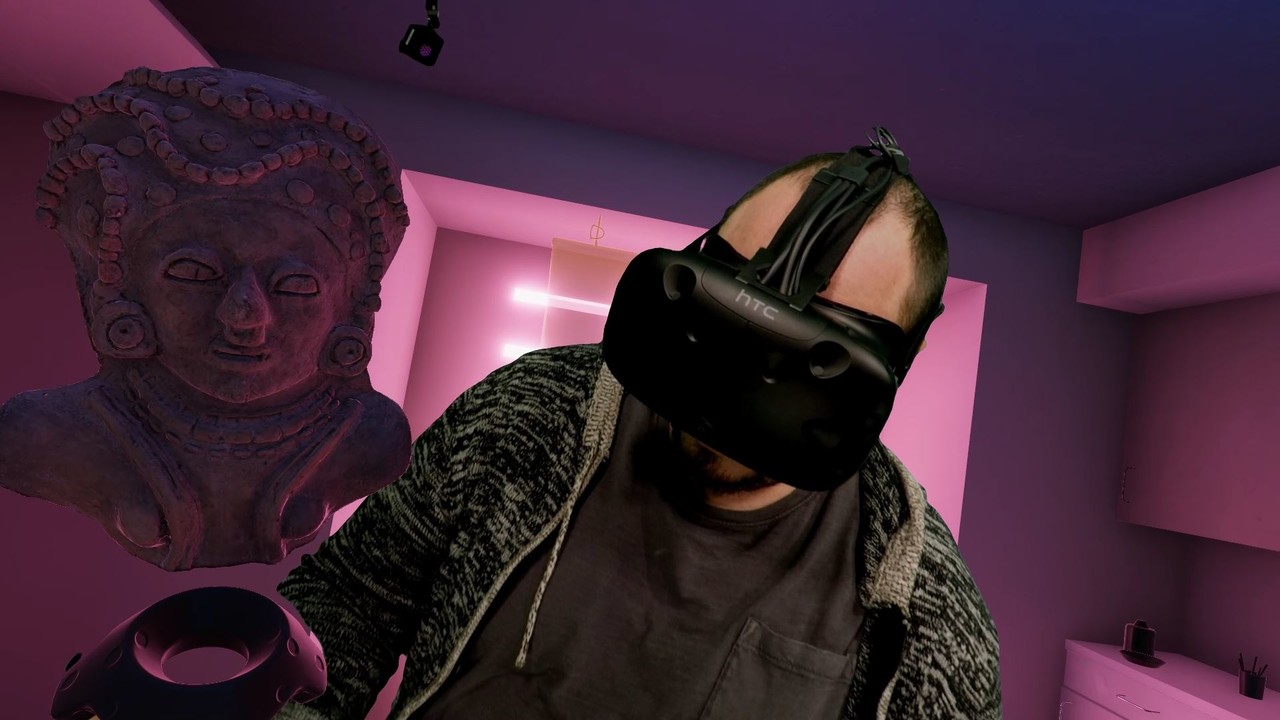

Earlier this week I posted some quick test images of a past personal project with ray tracing enabled in Unreal Engine 4.22. Today I want to share some before-and-after images: comparison GIFs and new screenshots taken at 3440x1440. Please note that this was all done on a Pascal-series GTX 1070 Ti, so I did not have the dedicated ray tracing hardware of the RTX cards, and my results should not be taken as indicative of RTX-powered ray tracing in Unreal Engine 4.22.

*It seems ArtStation downscales my 3440x1440 image uploads. If anyone is interested in the full-resolution images, please let me know in the comments below.

Setting up Ray Tracing in Unreal Engine 4.22

Ray tracing in Unreal Engine 4.22 on a non-RTX GPU requires at least Windows 10 version 1809 and NVIDIA GeForce driver 425.31. To check which version of Windows 10 you have installed, press the Windows key, type "about your pc", and hit Enter. This opens a window where you can verify your OS version in the "Windows specifications" section.
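If you'd rather check the OS requirement from a script, here is a minimal Python sketch. It assumes that version 1809 corresponds to OS build 17763 and that `platform.version()` returns a string like `10.0.17763` on Windows. The helper names `build_number` and `meets_1809` are made up for this example, and it does not check the driver version.

```python
import platform

# Windows 10 version 1809 (October 2018 Update) is OS build 17763.
MIN_BUILD_1809 = 17763


def build_number(version: str) -> int:
    """Return the build part of a version string such as '10.0.17763'."""
    parts = version.split(".")
    return int(parts[2]) if len(parts) >= 3 else 0


def meets_1809(version: str) -> bool:
    """True if the version string is Windows 10 build 17763 or newer."""
    major = int(version.split(".")[0])
    return major >= 10 and build_number(version) >= MIN_BUILD_1809


if __name__ == "__main__":
    if platform.system() == "Windows":
        current = platform.version()
        status = "OK for DXR" if meets_1809(current) else "older than 1809"
        print(current, status)
    else:
        print("Not running on Windows")
```

The comparison is on the build number rather than the "1809" label because the label is not part of the version string Python reports.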

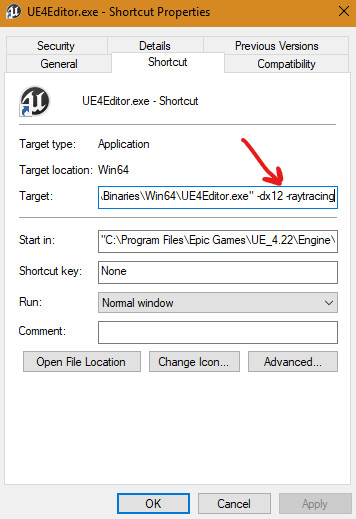

Assuming you have Unreal Engine 4.22 installed, next create a shortcut to the editor executable (UE4Editor.exe) and append the following to the shortcut's Target field:

-dx12 -raytracing

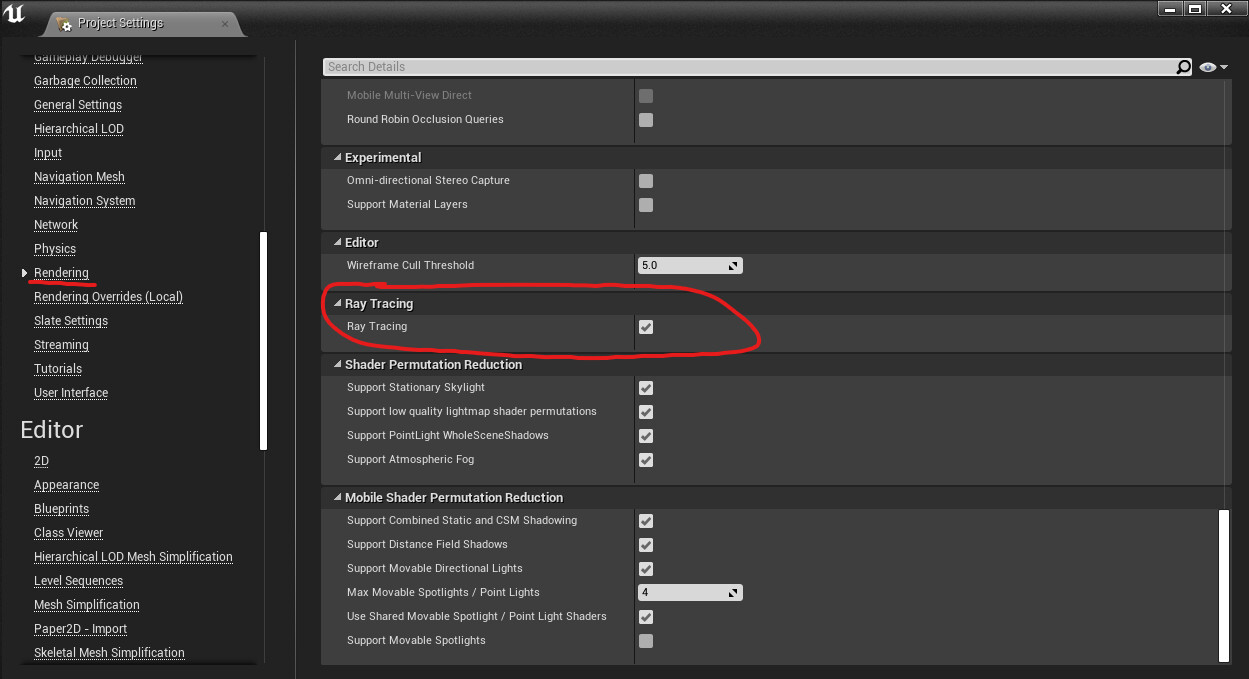

The above shortcut forces Unreal to launch with DirectX 12 and DXR so the ray tracing features are available. Once in the engine, make sure ray tracing is enabled in your project's rendering settings. This requires an editor restart and a recompile of all shaders, distance fields, etc.
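If you don't want to maintain a shortcut, the same launch can be scripted. The sketch below only builds the command line with the two flags from above and starts the editor if it is found. Both paths are hypothetical placeholders for a default-looking install and a made-up project, so adjust them to your machine. `editor_command` is a helper name invented for this example.

```python
import subprocess
from pathlib import Path

# The two launch flags used in the shortcut above.
RAY_TRACING_FLAGS = ["-dx12", "-raytracing"]


def editor_command(editor_exe, project_file=None):
    """Build the argument list: editor, optional .uproject, then the flags."""
    cmd = [str(editor_exe)]
    if project_file is not None:
        cmd.append(str(project_file))
    cmd.extend(RAY_TRACING_FLAGS)
    return cmd


if __name__ == "__main__":
    # Hypothetical paths, not taken from the original post.
    editor = Path(r"C:\Program Files\Epic Games\UE_4.22\Engine\Binaries\Win64\UE4Editor.exe")
    project = Path(r"C:\Projects\MyScene\MyScene.uproject")

    cmd = editor_command(editor, project)
    print(" ".join(cmd))

    # Only launch if the editor actually exists at that location.
    if editor.exists():
        subprocess.Popen(cmd)
```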

Once you're back in the editor after completing the above steps, you'll know ray tracing is active when your editor FPS plummets!

Project console variables and further setup

Before continuing, a great ray tracing console variable PDF put together by Epic can be found here. The PDF also goes into further detail on getting ray tracing up and running.

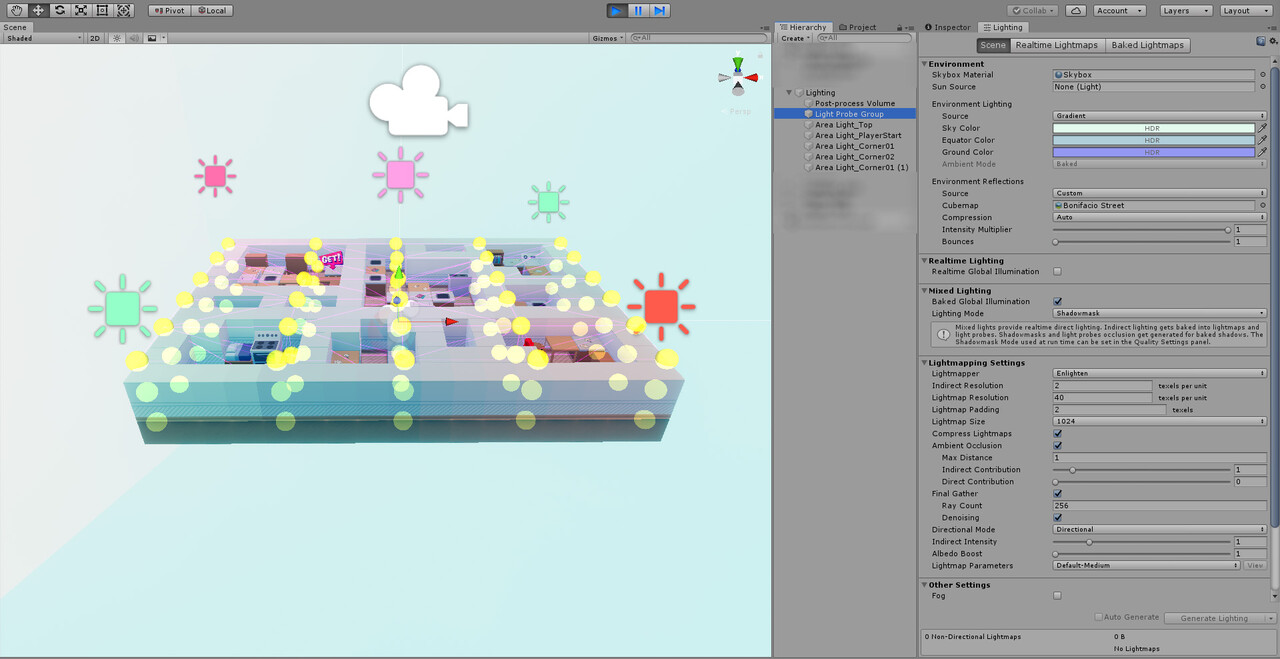

When I got my scene up and running, the first thing I noticed was my lighting looking, well, like hot garbage. This is because ray traced reflections are enabled by default, which makes all reflection captures useless. Once I deleted all the reflection captures, the scene looked recognizable.

Here are the console variables I enabled and tweaked for screen grabbing purposes. PLEASE NOTE: I tweaked these settings for the sole purpose of saving high-resolution screenshots from the editor. I cannot attest to how these settings will perform on your rig or scene. Moreover, I didn't get around to adjusting the denoiser settings.

r.RayTracing.EnableMaterials 1
r.RayTracing.Reflections 1
r.RayTracing.Shadows 1
r.RayTracing.AmbientOcclusion 1
r.RayTracing.GlobalIllumination 1
r.RayTracing.GlobalIllumination.SamplesPerPixel 2
r.RayTracing.GlobalIllumination.MaxBounces 2
r.RayTracing.GlobalIllumination.EvalSkyLight 1
r.RayTracing.Reflections.MaxBounces 2
r.GlobalIllumination.Denoiser.ReconstructionSamples 64
r.GlobalIllumination.Denoiser.HistoryConvolution.SampleCount 64
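Typing these into the console every session gets old quickly. As a rough sketch, the snippet below keeps the same variables and values in one place and prints them either as console commands or as an ini section. Writing them under `[SystemSettings]` in your project's `Config/DefaultEngine.ini` is my assumption about where you might want to persist them, not something I tested for this post. The function names are invented for the example.

```python
# The console variables and values listed above, in the same order.
CVARS = {
    "r.RayTracing.EnableMaterials": 1,
    "r.RayTracing.Reflections": 1,
    "r.RayTracing.Shadows": 1,
    "r.RayTracing.AmbientOcclusion": 1,
    "r.RayTracing.GlobalIllumination": 1,
    "r.RayTracing.GlobalIllumination.SamplesPerPixel": 2,
    "r.RayTracing.GlobalIllumination.MaxBounces": 2,
    "r.RayTracing.GlobalIllumination.EvalSkyLight": 1,
    "r.RayTracing.Reflections.MaxBounces": 2,
    "r.GlobalIllumination.Denoiser.ReconstructionSamples": 64,
    "r.GlobalIllumination.Denoiser.HistoryConvolution.SampleCount": 64,
}


def to_console_commands(cvars):
    """One 'name value' line per variable, ready to paste into the console."""
    return [f"{name} {value}" for name, value in cvars.items()]


def to_ini_section(cvars, section="SystemSettings"):
    """Render the variables as 'name=value' lines under an ini section header.

    The default section name is an assumption; change it if your setup differs.
    """
    lines = [f"[{section}]"]
    lines += [f"{name}={value}" for name, value in cvars.items()]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print("\n".join(to_console_commands(CVARS)))
    print()
    print(to_ini_section(CVARS))
```

Keeping the values in a single dictionary also makes it easy to swap in a cheaper set for navigating the scene and a heavier set for screenshots.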

PRO TIP:

Scene navigation a pain in the ass after getting ray tracing sorted? Set your editor viewport's view mode to Ray Tracing Debug > BaseColor. Enjoy high frame rates for navigating around your scene! Swap back to Lit when ready for ray traced goodness.

Ray Traced Screenshots & Comparisons

Most noticeable to me were the more accurate reflections, the secondary light bounce thanks to global illumination, and the incredible contact shadows thanks to ray traced ambient occlusion.

In closing

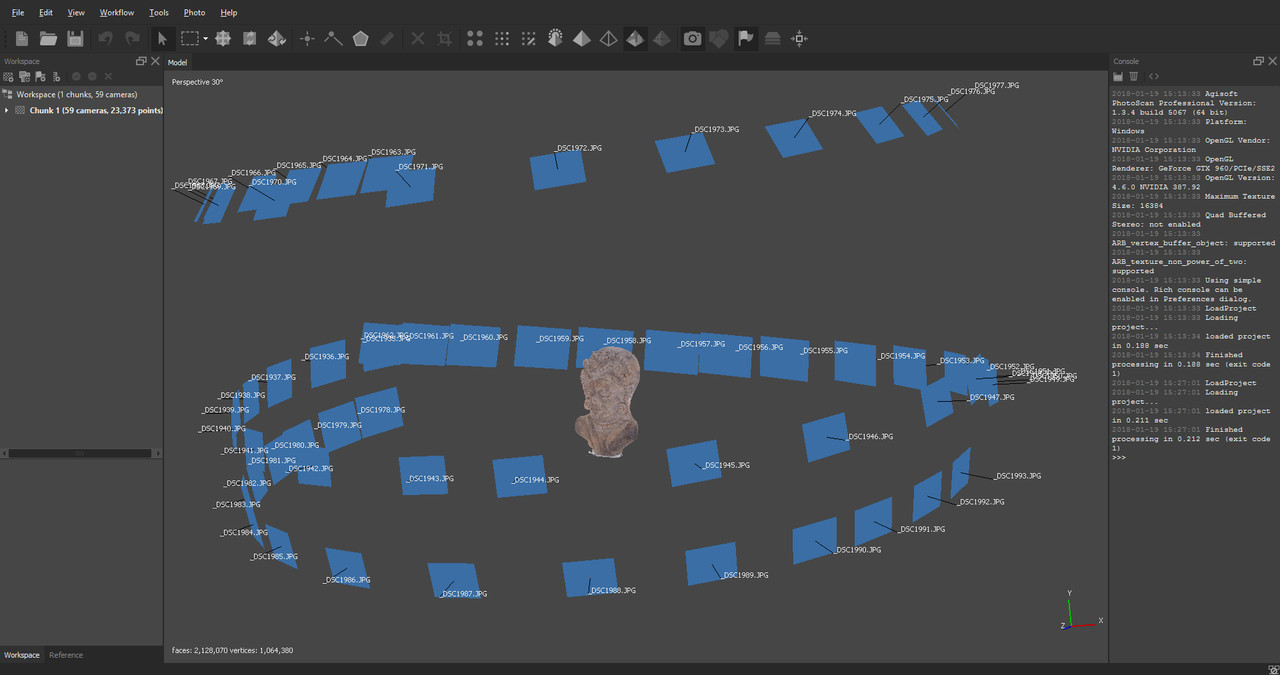

I'd love to see how this performs on an RTX card some day. Additionally, I need to spend more time figuring out how to tweak the denoising settings. My next personal project, currently in progress, will go through a similar comparison between ray traced and non-ray-traced setups.

Please feel free to ask questions in the comments down below, thanks for reading.

-Jon